Introducing AudioMass (https://audiomass.co), an open-source, web-based audio and waveform editing tool.

AudioMass lets you record audio or load your existing tracks, and modify them by trimming, cutting, pasting or applying effects, from compression and paragraphic equalizers to reverb, delay, repair tools, pitch/speed profiles and multitrack mixdown. AudioMass also supports hotkeys, offline use and a responsive interface, so quick edits stay quick. It is written solely in plain old-school JavaScript, weighs under 100kb compressed and has no backend or framework dependencies.

It also has very good browser and device support.

:: Feature List ::

- Loading Audio, navigating the waveform, zoom and pan

- Visualization of frequency levels

- Peak and distortion signaling

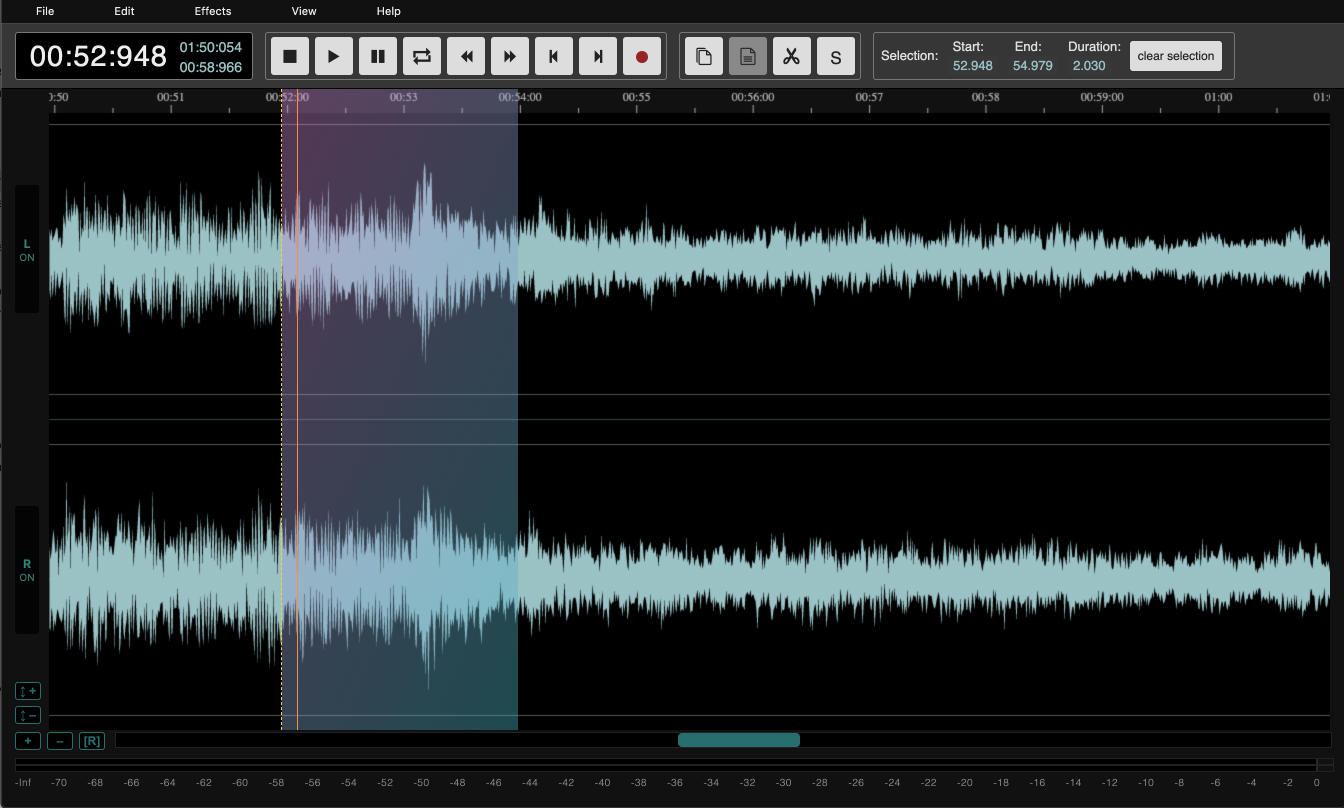

- Cutting/Pasting/Trimming parts of the audio

- Inverting and Reversing Audio

- Zero-crossing selection tools

- Markers / trackers for cue points and quick jumps

- Automatic beat detection, beat bars and snap-to-beat

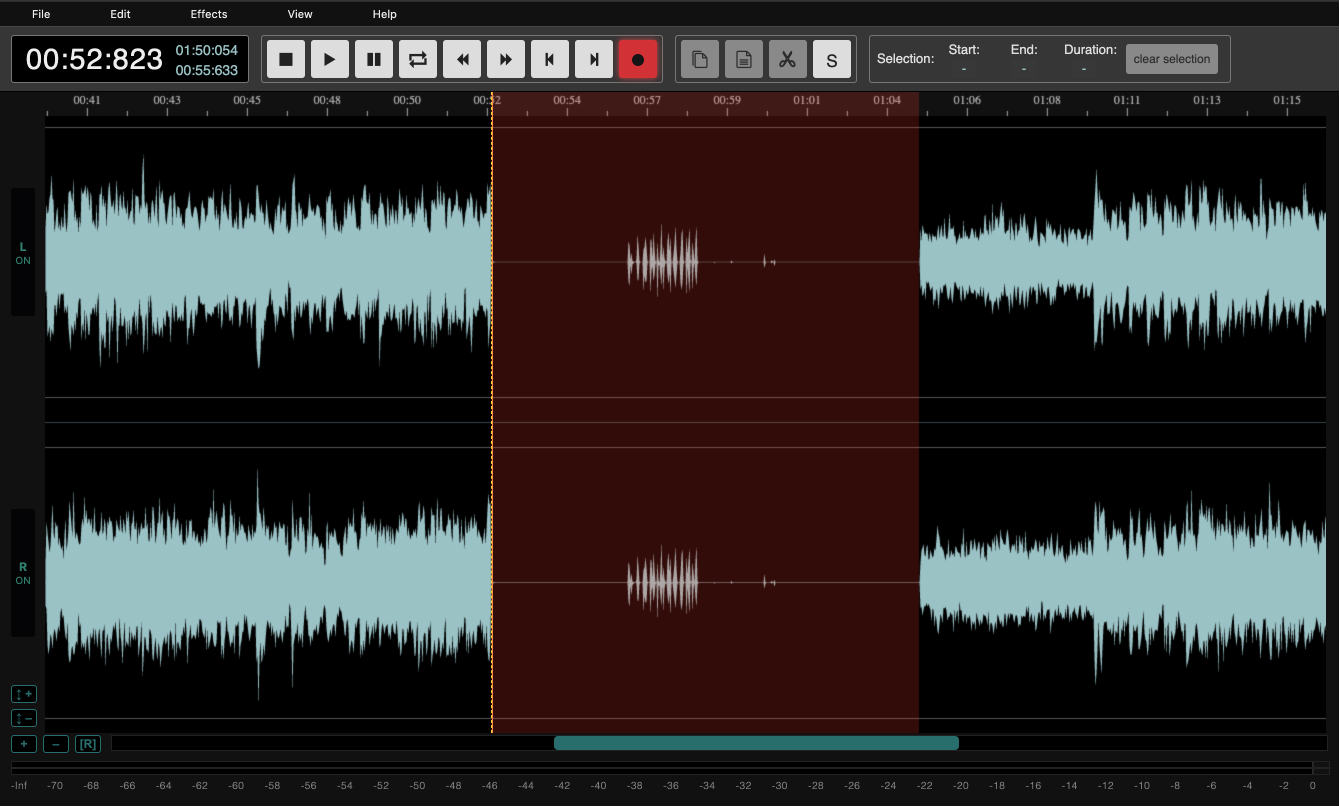

- Recording Audio

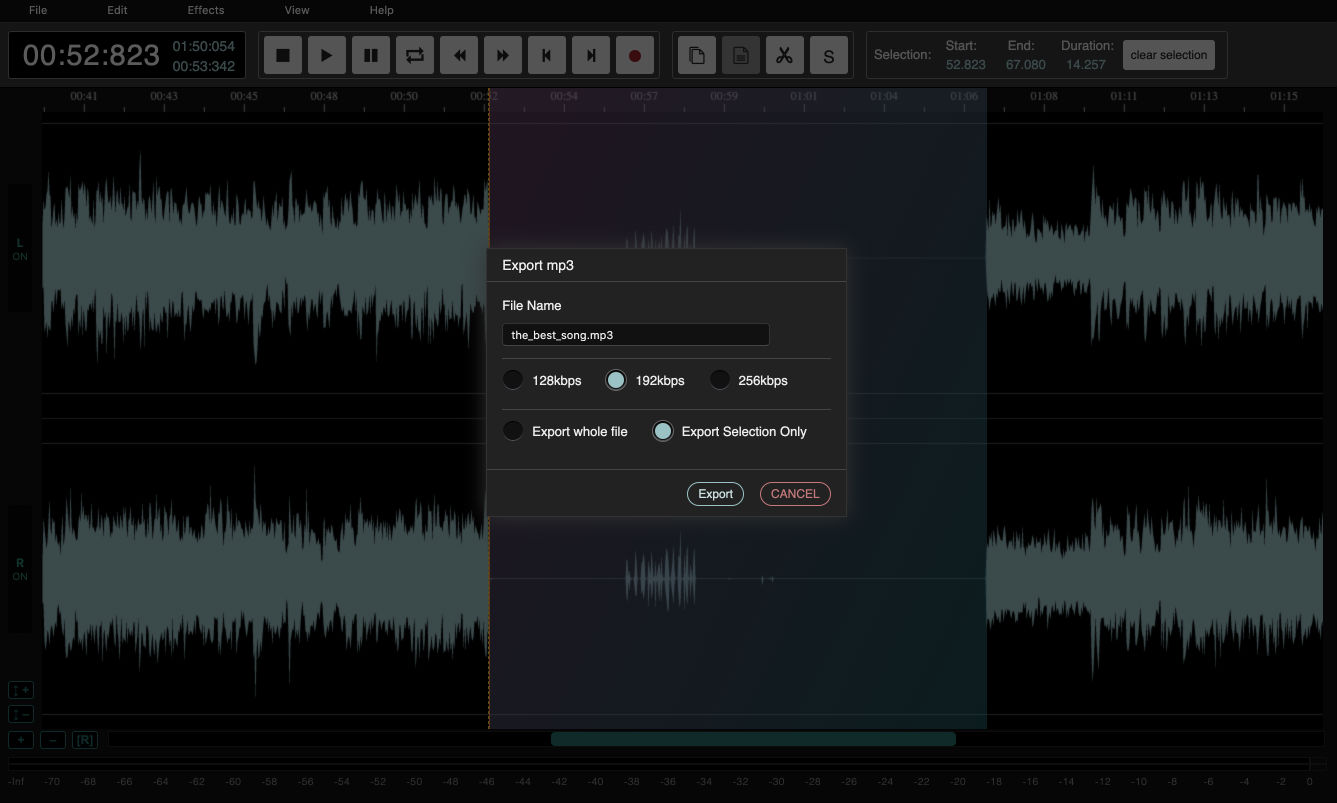

- Exporting to mp3

- Modifying volume levels

- Fade In/Out

- Compressor

- Normalization

- Reverb

- Delay

- Distortion

- Pitch Shift

- Graph-based pitch / speed profiles

- Click, hum and edit repair

- Creating seamless loops with crossfade preview

- Keeps track of states so you can undo mistakes

- Offline support!

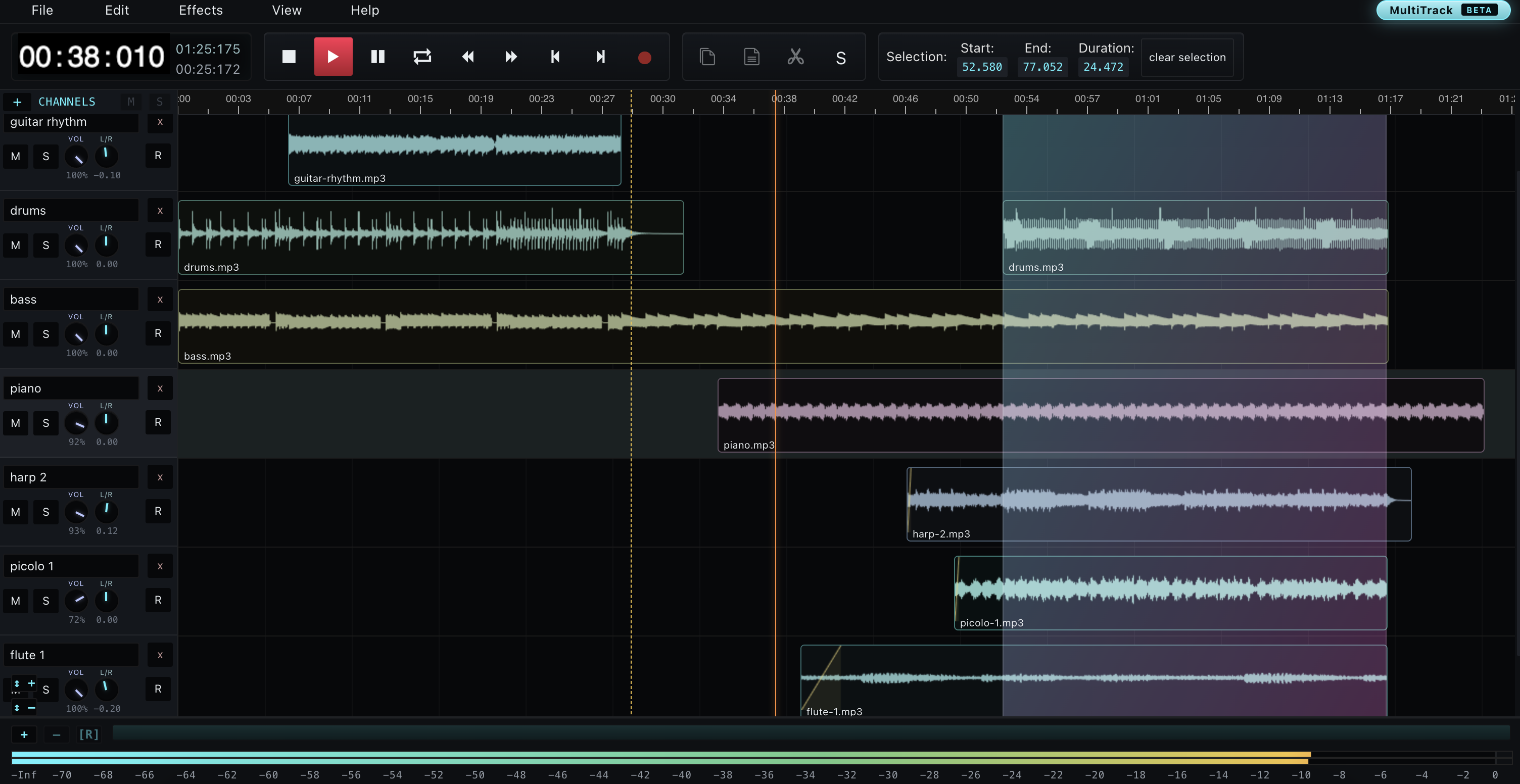

- Multitrack editing and mixdown

- Recent changelog

And all this, still under 100kb of JS!

Getting Started

To get started, drag and drop an audio file, or try the included sample.

Once the file is loaded and the waveform is visible, you can zoom in, pan around, or select a region.

Waveform Tools

The main editor is built around fast region editing. Select part of the waveform to cut, copy, paste, trim, silence, reverse, invert, or process only that section. The display also includes frequency visualization, peak/distortion signaling and zero-crossing selection, so you can spot obvious problems and avoid rough edit points while editing.
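As a rough illustration of what a zero-crossing selection tool does (this is a sketch, not the actual AudioMass code, and `nearestZeroCrossing` is a hypothetical name), an edit point can be moved to the closest spot where the signal changes sign:

```javascript
// Hypothetical helper, not taken from the AudioMass source.
// Returns the index nearest to `index` where the signal crosses zero,
// i.e. where two neighbouring samples differ in sign (or one of them is 0).
function nearestZeroCrossing (samples, index) {
    var isCrossing = function (i) {
        if (i < 1 || i >= samples.length) return false;
        var a = samples[i - 1], b = samples[i];
        return a === 0 || b === 0 || (a < 0) !== (b < 0);
    };
    // search outwards from the requested index, one step at a time
    for (var d = 0; d < samples.length; d++) {
        if (isCrossing (index - d)) return index - d;
        if (isCrossing (index + d)) return index + d;
    }
    return index; // no crossing found, leave the edit point where it was
}
```

Cutting at such a point tends to avoid the audible click that a jump between two unrelated sample values can produce.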

Markers / Trackers

Markers are little named trackers you can drop on the waveform when a spot matters. Press [M], double-click the top ruler, or right-click and choose Add Marker Here. You can drag them around, rename or delete them, jump between them with [ and ], or hold [Shift] while jumping to quickly turn the space between markers into a selection. Simple, tiny, and very useful when you are editing something longer than a ringtone.
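Jumping between markers boils down to finding the closest marker before or after the playhead. A minimal sketch, assuming markers are stored as plain `{ name, time }` objects (the shape and the `jumpToMarker` name are made up for illustration):

```javascript
// Hypothetical sketch: markers are { name: string, time: seconds } objects.
// direction > 0 finds the next marker after `time`, otherwise the previous one.
function jumpToMarker (markers, time, direction) {
    // work on a sorted copy so the caller's array is left untouched
    var sorted = markers.slice ().sort (function (a, b) {
        return a.time - b.time;
    });
    if (direction > 0) {
        for (var i = 0; i < sorted.length; i++) {
            if (sorted[i].time > time) return sorted[i];
        }
    } else {
        for (var j = sorted.length - 1; j >= 0; j--) {
            if (sorted[j].time < time) return sorted[j];
        }
    }
    return null; // nothing further in that direction
}
```

Selecting the space between two markers is then just a matter of using the current time and the returned marker's time as the region edges.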

Beat Tools

AudioMass can detect a rough tempo, draw metronome beat bars and snap edits to those beats. It is a small helper for loops, repeats and timing fixes, without turning the editor into a full DAW screen when you do not need one.
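Once a tempo is known, snapping is simple arithmetic. The sketch below assumes a constant tempo and a known time for the first beat; both the function name and its parameters are hypothetical, not AudioMass internals:

```javascript
// Hypothetical helper: snaps a time (in seconds) to the nearest beat.
// `bpm` is the detected tempo, `offset` is the time of the first beat.
function snapToBeat (time, bpm, offset) {
    var beatLength = 60 / bpm; // seconds per beat
    var beats = Math.round ((time - offset) / beatLength);
    // never snap to a beat that would fall before the first one
    return offset + Math.max (0, beats) * beatLength;
}
```

For example, at 120 BPM a beat lasts half a second, so an edit at 1.3 seconds lands on the beat at 1.5 seconds.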

Effects And Processing

AudioMass includes the usual quick processing tools: gain, fade in/out, compressor, normalization, reverb, delay, distortion and pitch shifting. There is also a graph-based pitch/speed profile for ramps and Doppler-style moves, plus repair tools for clicks, hum and hard edit points. Effects can be previewed before applying, and most of them work on either the current selection or the whole file.
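To make one of these concrete, here is a minimal sketch of peak normalization on raw samples. It is an illustration under the assumption that audio is held in a Float32Array in the -1 to 1 range, not the actual AudioMass implementation:

```javascript
// Hypothetical sketch of peak normalization.
// Scales the buffer in place so its loudest sample reaches `target` (0..1).
function normalizePeak (samples, target) {
    var peak = 0;
    for (var i = 0; i < samples.length; i++) {
        var abs = Math.abs (samples[i]);
        if (abs > peak) peak = abs;
    }
    if (peak === 0) return samples; // pure silence, nothing to scale
    var gain = target / peak;
    for (var j = 0; j < samples.length; j++) {
        samples[j] *= gain;
    }
    return samples;
}
```

Because `subarray` on a typed array shares memory with the original, something like `normalizePeak (channel.subarray (start, end), 1)` would process only a selection while leaving the rest of the file untouched.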

Creating Seamless Loops

Select a region and use Edit > Seamless Loop, or right-click the waveform and choose Seamless Loop. AudioMass opens a small loop preview where you can adjust the crossfade, trim silence from the edges, snap to smoother zero crossings, click around the preview to test the loop, repeat it, then apply it back to the file or open it in a new editor tab.
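One common way to build such a loop is to blend the tail of the region into its head. The sketch below uses a linear crossfade and made-up names; the article does not say which curve or approach AudioMass itself uses:

```javascript
// Hypothetical sketch: make a region loop cleanly by blending its tail
// into its head with a linear crossfade of `fadeLen` samples.
// The result is `fadeLen` samples shorter than the input.
function makeSeamlessLoop (samples, fadeLen) {
    // the fade cannot be longer than half of the region
    fadeLen = Math.max (0, Math.min (fadeLen, Math.floor (samples.length / 2)));
    var outLen = samples.length - fadeLen;
    var out = new Float32Array (outLen);
    for (var i = 0; i < outLen; i++) {
        out[i] = samples[i];
    }
    for (var k = 0; k < fadeLen; k++) {
        var t = k / fadeLen;            // 0 at the loop start, rising towards 1
        var tail = samples[outLen + k]; // audio that followed the loop end
        out[k] = tail * (1 - t) + samples[k] * t;
    }
    return out;
}
```

The first output sample is exactly what would have followed the last one, so the wrap-around point is continuous, and the head then fades back to the original material.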

Undo And Offline

AudioMass keeps an undo stack for edits, so you can experiment without committing every change permanently. It also runs locally in the browser with no backend, and can keep working offline after it has been loaded.
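An undo stack of this kind can be sketched in a few lines. The version below is an assumption about the general shape (a bounded list of past states plus a redo list), not a copy of the AudioMass code:

```javascript
// Hypothetical sketch of a bounded undo/redo stack holding editor states.
function UndoStack (limit) {
    this.limit = limit || 20;
    this.past = [];   // states we can undo back to
    this.future = []; // states we can redo
}

// call this with the current state right before an edit changes it
UndoStack.prototype.push = function (state) {
    this.past.push (state);
    if (this.past.length > this.limit) this.past.shift (); // drop the oldest
    this.future.length = 0; // a new edit invalidates the redo history
};

// both methods take the current state and return the state to restore
UndoStack.prototype.undo = function (current) {
    if (this.past.length === 0) return current;
    this.future.push (current);
    return this.past.pop ();
};

UndoStack.prototype.redo = function (current) {
    if (this.future.length === 0) return current;
    this.past.push (current);
    return this.future.pop ();
};
```

The limit matters for audio: if every state holds a full copy of the samples, an unbounded history would eat memory very quickly.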

Recording Audio

To record audio, simply press the Recording button, or the [R] key.

Exporting to mp3

In order to export back to mp3, click on 'File', then 'Export to mp3', and follow the modal's instructions.

The story behind AudioMass. And a short rant on web interfaces.

I wrote AudioMass back in June 2018 and it stayed dormant on my hard disk until I decided to share it with the world today (July 13th, 2019, but you might be seeing this in 2020. Hi!!). It started as a small personal tool for quick visualization of waveforms. Later I added the ability to cut/copy/paste as well as fade in and out. And soon after, good ol' feature creep and perfectionism took over! Before long, it turned into a challenge to see how close to a full-featured waveform editor it could get, whilst maintaining acceptable performance and a small file size.

In general I am a big fan of the interfaces of DAWs (Digital Audio Workstations). They are extremely complex, intricate and versatile, and they manage to remain visually pleasant even with their infinite options and knobs. Many times I feel the web has taken a very wrong turn, as amazing interfaces such as...

Sonar

Fruity loops

...have existed for more than 10-15 years now, while we are still struggling to animate some rectangles at 60fps... So for AudioMass I wanted to try and make a fast and performant interface, drawing inspiration from the examples I mentioned earlier rather than from traditional web development practices. This is my unconvincing but truthful excuse as to why the code is ugly: it is focused on being fast and getting the job done, with little regard for structure.

For the record: AudioMass was started in 2018, multitrack was added but never deployed in 2022, and to this day the thing is still about 90% handcrafted using older web technologies and a slightly old-school coding style. I keep working on it mostly because it is fun, which, in 2026, I have been reliably informed is still a valid reason to write software :)

Going forward I would like to slowly clean up the multitrack logic and redo the rendering fully in canvas (or maybe WebGPU, we'll see), and, if I can pull it off, try to elevate AudioMass into something that stands a little closer to a proper professional audio workflow on the web. It is also not particularly well-optimized for mobile right now, but hopefully that is something that can be smoothed out with time.

Building the interface

Let's say we have a "PLAY" button and when we press it the track begins to play. I suppose we would want the button's color and state to reflect that the track is now playing. So naively we would do something like:

    // naive approach: the click handler styles the button directly
    btn.onclick = function () {
        this.classList.add ('active');
    };

But what happens if we have a hotkey that triggers the same action? Let's say we press [SPACEBAR] and the track begins to play. Do we modify that button's class in the spacebar's handler?

    // the hotkey handler now has to know about the button too
    document.onkeypress = function ( e ) {
        if ( e.keyCode === 32) {
            e.preventDefault ();
            document.querySelector ('.playbtn').classList.add ('active');
        }
    };

And what happens if there are 2 buttons, or one gets dynamically removed? Do we select them all with querySelectorAll and iterate? Hmmm...

And if the track is playing and we hit [SPACEBAR] or the play button again, we need to stop playing. What do we do then? You can see how this becomes messy very quickly, as everything gets very tightly coupled together in a big dependency ball.

Introducing the observer pattern. Actions are represented by events. So the above logic can be expressed as:

    // the button only announces intent
    btn.onclick = function () {
        FireEvent ('RequestTogglePlay');
    };

    // ...and updates its look from the events it receives
    On ('WillPlay', function () {
        btn.classList.add ('active');
    });

    On ('WillStop', function () {
        btn.classList.remove ('active');
    });

    // the hotkey fires the very same request
    document.onkeypress = function ( e ) {
        if ( e.keyCode === 32) {
            e.preventDefault ();
            FireEvent ('RequestTogglePlay');
        }
    };

    // a single place decides what the request actually does
    On ('RequestTogglePlay', function () {
        if (track.is_playing) {
            FireEvent ('WillStop');
            track.stop ();
        }
        else {
            FireEvent ('WillPlay');
            track.play ();
        }
    });

    // the track reports back once the action has happened
    track.onPlayStart = function () {
        FireEvent ('DidPlay');
    };

    track.onPlayStop = function () {
        FireEvent ('DidStop');
    };

Now this is completely decoupled and dependency free! The button will set its state according to the events it receives, and both the button and the spacebar key rely on the same mechanisms.

You may notice the vocabulary we are using: "Request", "Will" and "Did". These are arbitrarily chosen to impose some extra structure.

"Request" denotes intent to perform an action. It is not guaranteed that the action will execute, as there might be conditions preventing it (e.g. uninitialized or still loading objects). "Will" means that the conditions passed and we are attempting to perform the action. And "Did" means that the action just got performed.

It might be a bit too verbose, but it worked very well for structuring AudioMass's interface.
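The snippets above rely on two helpers, On and FireEvent. Their names come from the examples, but their bodies are not shown, so what follows is only a minimal sketch of how such a pair could be written, together with a fake `track` object (an assumption, used here to show a refused "Request"):

```javascript
// Minimal sketch of an event bus. Only the names On and FireEvent come
// from the article, the implementation is an assumption.
var listeners = {};

function On (name, callback) {
    if (!listeners[name]) listeners[name] = [];
    listeners[name].push (callback);
}

function FireEvent (name, data) {
    var list = listeners[name];
    if (!list) return;
    // iterate over a copy, so handlers may register listeners safely
    list = list.slice ();
    for (var i = 0; i < list.length; i++) {
        list[i] (data);
    }
}

// Example: a fake track that is not ready yet, so the request is dropped.
var track = { ready: false, is_playing: false };
var log = [];

On ('RequestTogglePlay', function () {
    if (!track.ready) return; // a "Request" may be refused
    if (track.is_playing) {
        FireEvent ('WillStop');
        track.is_playing = false;
    } else {
        FireEvent ('WillPlay');
        track.is_playing = true;
    }
});

On ('WillPlay', function () { log.push ('WillPlay'); });
On ('WillStop', function () { log.push ('WillStop'); });
```

With this in place, any number of buttons or hotkeys can fire 'RequestTogglePlay' without knowing about each other, and firing an event nobody listens to is simply a no-op.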

Dockable UI

One thing I love about DAW interfaces is that every window can be pulled out of the main host. I fondly remember having 3 screens full of VST plugins. So can we do the same in the browser?

Yes! And it uses some of the oldest tricks in the book. Essentially we create a pop-up window with window.open and just pass buffers to the window object it returns. Surprisingly, it is quite performant on all browsers except IE Edge. I believe they are serializing every packet in ASCII or base64, or something along those lines. Also, Chrome has an interesting bug where you can't pass more than 512 byte buffers.

So for the undocked window, we call window.open. But how do we make it work when it is docked? It would be quite cumbersome to write the same functionality twice, once as a standalone page, and once as an in-page script. Luckily we can avoid that completely, and re-use the same page by using iframes.

The only difference is that the undocked version uses window.opener to reference its parent, whereas the iframe uses window.parent.
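That difference can be hidden behind one small helper, so the panel code stays identical in both modes. This is a sketch with a hypothetical function name, relying only on the standard behavior that window.opener is null for pages that were not opened by a script, and that window.parent points to the window itself on a top-level page:

```javascript
// Hypothetical helper: find the host editor window from inside a panel.
// `win` is the panel's own window object.
function getHostWindow (win) {
    if (win.opener) return win.opener; // undocked pop-up
    if (win.parent && win.parent !== win) return win.parent; // docked iframe
    return null; // opened directly as a standalone page
}
```

Inside the panel page this would be called as `getHostWindow (window)`, and everything else talks to the returned object without caring whether the panel is docked or not.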

Multitrack (back in 2026!)

So remember when I said the next big feature was going to be multitrack support? Well, it is finally here. AudioMass now has a proper multitrack mode where you can layer multiple tracks, mix them together and bounce the whole thing back down to a single file. It is essentially a small DAW bolted on top of the regular waveform editor, sharing the same engine, the same hotkeys and the same effects.

Hit the MultiTrack button up in the top header and you get a fresh session with a couple of empty channels. Drag and drop audio files onto a track, or anywhere on the canvas really, and they will land where you dropped them. Each file becomes a clip that you can grab and slide around freely on its lane.

Clips can be trimmed by dragging their edges, faded in or out by pulling the little corner handles, split at the playhead with [Ctrl/Cmd + X], copied, duplicated, renamed and deleted. When two clips on the same track overlap, AudioMass automatically draws a crossfade in the overlap region so your transitions stay smooth instead of clicking. The crossfade follows the clips around. Drag either side and the curve readjusts on its own.
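For the curious, a crossfade is just two gain curves running in opposite directions across the overlap. The sketch below uses an equal-power curve, which is an assumption on my part since the shape AudioMass draws is not specified here, and `crossfadeGains` is a hypothetical name:

```javascript
// Hypothetical sketch: gains for a crossfade across an overlap.
// `position` runs from 0 (overlap start) to 1 (overlap end).
// The equal-power curve is an assumption, not a documented AudioMass detail.
function crossfadeGains (position) {
    var t = Math.min (1, Math.max (0, position)); // clamp to the overlap
    return {
        fadeOut: Math.cos (t * Math.PI / 2), // gain for the outgoing clip
        fadeIn: Math.sin (t * Math.PI / 2)   // gain for the incoming clip
    };
}
```

With this curve the squares of the two gains always add up to 1, which keeps the perceived loudness roughly steady through the transition. Since the gains depend only on the relative position inside the overlap, they can be recomputed whenever either clip is dragged.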

Each track header has the usual suspects: volume, pan, mute, solo and record-arm. The little round knobs are continuous (drag up/down) and double-clicking resets them. There is also a separate Mixer view if you prefer the vertical-strips-with-big-meters look while you are mixing.

Recording works just like in the single-track editor, except it records onto whichever track is armed. You can punch in, monitor with the meters, and the moment you stop, the recorded blob immediately becomes a regular clip you can move and edit like an imported file. Multiple takes stack into the same track without losing previous ones.

Effects can be applied to the whole clip or to a region within it. Processing goes through an OfflineAudioContext, so it is non-destructive until you commit, and you can preview it live before applying. The undo stack covers every clip-level edit too, so you can experiment freely.

The whole session, tracks, clips, fades, knob values, the lot, can be saved out as a .amss file. It is just a wrapper around the raw audio plus some JSON describing the layout, compressed with LZMA. Drop it back in later and you pick up exactly where you left off. To export the final mix use Mixdown, and everything gets bounced through an offline render to a single wav or mp3, like any normal export.
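Conceptually, a mixdown sums every audible track into one buffer. The real export goes through an offline render as described above, so the following is only a simplified mono sketch of the idea, with a made-up track shape and the assumption that soloing any track silences all non-soloed ones:

```javascript
// Hypothetical sketch of a mono mixdown. Each track is assumed to be
// { samples: Float32Array, volume: number, mute: boolean, solo: boolean }.
function mixdown (tracks) {
    var anySolo = tracks.some (function (t) { return t.solo; });
    // assumption: with a solo active only soloed tracks are heard
    var audible = tracks.filter (function (t) {
        return anySolo ? t.solo : !t.mute;
    });
    var length = 0;
    audible.forEach (function (t) {
        length = Math.max (length, t.samples.length);
    });
    var out = new Float32Array (length);
    audible.forEach (function (t) {
        for (var i = 0; i < t.samples.length; i++) {
            out[i] += t.samples[i] * t.volume;
        }
    });
    // hard clip to the valid range so the result never exceeds -1..1
    for (var j = 0; j < out.length; j++) {
        out[j] = Math.max (-1, Math.min (1, out[j]));
    }
    return out;
}
```

Panning, fades and per-clip offsets are left out on purpose to keep the sketch short.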

Snapping to clip edges, the playhead and the region works while dragging, and the whole thing is reasonably mobile-friendly with touch gestures, pinch-to-zoom on the timeline and long-press context menus. It is not Pro Tools, but for arranging takes, building podcasts or stitching ideas together quickly in a browser tab, it gets the job done :)

:: Multitrack Keyboard Shortcuts ::

- [Space]: play / stop

- [Shift + Space]: pause

- [R]: toggle recording on the armed track

- [←] / [→]: seek (auto-accelerates the longer you hold)

- [Shift + ←] / [Shift + →]: jump to region edges / start / end

- [↑] / [↓]: select previous / next channel

- [Tab]: center the view on the playhead

- [Ctrl/Cmd + X]: split the selected clip at the playhead

- [X]: toggle a crossfade between overlapping clips

- [Ctrl/Cmd + C] / [Ctrl/Cmd + V]: copy / paste a clip

- [Backspace] / [Delete]: delete the selected clip

- [Ctrl/Cmd + Z] / [Ctrl/Cmd + Y]: undo / redo (covers every clip-level edit)

- [Ctrl/Cmd + A]: select the whole arrangement as a region

- [Ctrl/Cmd + S]: open the save / export menu

- [Esc]: close any open context menu

The Shift + key variants of the above (e.g. Shift + C for copy) work too. They were the original bindings from before the Ctrl/Cmd ones were added, kept around so old muscle memory doesn't break.

Recent Changelog

- July 2022: Multitrack mode with clips, fades, mixer controls, recording and mixdown.

- January 2026: .amss session files, so multitrack projects can be saved and opened again later.

- February 2026: Pitch / Speed Profile with graph editing, finer controls, Doppler-style presets and live preview seeking.

- March 2026: Automatic beat detection, metronome bars and snap-to-beat editing.

- April 2026: New repair tools for clicks, hum and hard edit points.

- May 2026: Seamless Loop with crossfade preview, silence trim, zero-crossing snap, repeat and open-in-new-editor.

- May 2026: Small UI polish passes, including precise time boxes, zero-cross selection mode and update-ready reload notices.

Future work and performance considerations

There is a lot of room for improvement in almost all aspects.

First of all we can further reduce the filesize by around 20kb by removing the library we are using for rendering the waveform. We use only a fraction of its functionality so there is no reason to include it all.

We can also optimize the waveform rendering a lot. I heavily modified the library so that it computes and draws only the visible range. However, it still clears and redraws the whole canvas on every frame. We could take advantage of the 2D context's translate calls and shift the canvas around instead of redrawing all of the pixels.

We can also move some operations to a background thread, such as filter processing, so that the UI does not freeze when applying a long chain of effects.
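Computing only the visible range usually means reducing the samples on screen to one min/max pair per horizontal pixel. A rough sketch of that step follows; the function name and parameters are hypothetical, and the waveform library mentioned above may do this differently:

```javascript
// Hypothetical sketch: reduce only the visible samples to one min/max pair
// per horizontal pixel, ready to be drawn as vertical lines.
// `start` and `end` are sample indexes, `width` is the canvas width in pixels.
function visiblePeaks (samples, start, end, width) {
    var peaks = [];
    var perPixel = (end - start) / width; // samples covered by one pixel
    for (var x = 0; x < width; x++) {
        var from = Math.floor (start + x * perPixel);
        // always look at one sample at least, even when zoomed in very far
        var to = Math.max (from + 1, Math.floor (start + (x + 1) * perPixel));
        var min = Infinity, max = -Infinity;
        for (var i = from; i < to && i < end; i++) {
            if (samples[i] < min) min = samples[i];
            if (samples[i] > max) max = samples[i];
        }
        peaks.push ({ min: min, max: max });
    }
    return peaks;
}
```

The cost then depends on the zoom level and the canvas width rather than on the length of the whole file.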

However, the biggest issue I encountered is the Web Audio API itself. Every operation results in iterating over multiple long arrays per frame. Eventually the garbage collector fires and crackling is introduced. The only way around this is to use a small fftSize, but then the frequency resolution we have to work with is very coarse. Perhaps a pure WASM implementation would outperform trying to modify audio signals with JS. Only one way to find out I guess :)

Additionally, decodeAudioData provides no progress callback, and no way of cancelling it. So if you attempt to load a huge audio file, you will waste resources until it gets processed. There is no way around this currently and it can get annoying if you push a big file by mistake.
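The decoding work itself cannot be stopped, but a stale result can at least be kept from replacing a newer file. One possible pattern is a simple token check, sketched below; `createLoadGuard` and `showWaveform` are hypothetical names, and the wasted decoding time is not recovered by this:

```javascript
// Hypothetical sketch: a decode cannot be cancelled, but its result can be
// ignored. Every new load gets a token and only the latest token counts.
function createLoadGuard () {
    var latest = 0;
    return {
        begin: function () {
            latest += 1;
            return latest;
        },
        isCurrent: function (token) {
            return token === latest;
        }
    };
}

// Possible usage in the browser (showWaveform is made up for illustration):
//
// var guard = createLoadGuard ();
// var token = guard.begin ();
// audioContext.decodeAudioData (arrayBuffer).then (function (buffer) {
//     if (!guard.isCurrent (token)) return; // a newer file was loaded
//     showWaveform (buffer);
// });
```

If a big file is pushed by mistake and another one is loaded right after, the first decode still runs to completion, but its buffer is dropped instead of overwriting the editor state.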